Draw Things Test Set: a status update

Today’s image models are powerful, but where do they still break? In building the Draw Things Test Set, we found both recurring failure modes and a few surprises.

The new generation of image models, circa 2025, can do a remarkable range of things. They can modify elements within an image, generate photorealistic scenes, change style and lighting, and make many Photoshop-like tasks surprisingly easy. But where exactly are the boundaries of these models? Where do they fail, and how do they fail?

Draw Things Test Set is our attempt to answer those questions.

A few guiding principles shaped this Test Set. We think they also make it interesting to a broader audience, which is why we want to share an early status update.

We focused on practical use cases. People use Draw Things for all kinds of work, both professional and recreational. We want the Test Set to reflect those real-world uses, and to provide guardrails so that future model development does not regress on the capabilities people actually care about.

We focused on differentiating model capabilities in a way that clearly shows the progress made over the past few years. Rather than nitpicking aesthetics, we want the differences between models to be obvious, without depending on highly trained human taste to decide which result is only marginally better.

We wanted to map where model capabilities could go next, and what remains missing from the current generation. Ideally, the next wave of models will begin to close some of these gaps.

We ran all models on our own inference stack, which gives each one a fair playground. That allows us to fix parameters, keep results reproducible, and optimize each setup appropriately for the model being tested.

We plan to release the Calibrated Test Set publicly while keeping the full Test Set private to avoid saturation. Today, we want to share some of the findings that emerged during its construction. We will highlight a few failure cases from closed-source models, but those results may not be reproducible on your end. For models available in the Draw Things app, however, the results are reproducible. For each prompt, we select the best result across multiple trials, spanning different aspect ratios (1:1, 2:3, 3:2, 3:4, and 4:3) and resolutions.
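As a rough illustration of the best-of-trials selection described above, here is a minimal sketch. It is not our actual harness: the `Trial` type, the seed list, the single target pixel count, and the rounding to multiples of 64 are all assumptions made for the example. Only the five aspect ratios come from the text.

```python
from dataclasses import dataclass
from itertools import product

# The five aspect ratios named in the post.
ASPECT_RATIOS = [(1, 1), (2, 3), (3, 2), (3, 4), (4, 3)]
# Hypothetical values: one target pixel count and three fixed seeds.
TARGET_AREAS = [1024 * 1024]
SEEDS = [0, 1, 2]


@dataclass(frozen=True)
class Trial:
    """One generation attempt with every parameter pinned (hypothetical type)."""
    prompt: str
    width: int
    height: int
    seed: int


def dimensions(ratio, area, multiple=64):
    """Return (width, height) close to `area` pixels at the given ratio.

    Both sides are rounded to a multiple of `multiple` (an assumed constraint).
    """
    w_ratio, h_ratio = ratio
    scale = (area / (w_ratio * h_ratio)) ** 0.5
    width = max(multiple, round(w_ratio * scale / multiple) * multiple)
    height = max(multiple, round(h_ratio * scale / multiple) * multiple)
    return width, height


def build_trials(prompt):
    """Enumerate every (aspect ratio, area, seed) combination for one prompt."""
    trials = []
    for ratio, area, seed in product(ASPECT_RATIOS, TARGET_AREAS, SEEDS):
        width, height = dimensions(ratio, area)
        trials.append(Trial(prompt, width, height, seed))
    return trials


def best_trial(scores):
    """Given {Trial: human rating}, return the highest-rated trial."""
    return max(scores, key=scores.get)
```

Because every trial records its own size and seed, any result picked as "best" can be regenerated later with the same settings.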

1. Anatomy is still an issue

Contrary to common belief, anatomy is still a problem for the current generation of models, especially in complex scenes with conflicting instructions.

Prompt: A wide-angle realistic photo of a woman holding a steady yoga headstand on a mat. A grey tabby cat is curled up sleeping on her vertically upturned feet, while a small child in the foreground is carefully stacking a precarious tower of three wooden toy blocks on the woman’s horizontal stomach. The scene requires a clear void between the woman’s torso and the floor, with distinct points of contact for both the cat and the blocks.

Even in simpler cases, larger models still tend to hold an advantage over smaller ones. Distilled models in particular can show more anatomical issues than their base counterparts.

Prompt: A person throwing a red ball to a dog in a sunny park. The ball is in mid-air between the person’s extended arm and the jumping dog.

2. Knowledge distribution is uneven

Diffusion Transformer models arguably contain much more world knowledge than earlier U-Net-based ones, and pairing them with a real LLM certainly helps. But the knowledge distribution is still uneven, and the gaps between models remain obvious.

Prompt: A high-resolution product photo of a 1998 Apple iMac G3 in Bondi Blue. The shot emphasizes the translucent pinstriped plastic casing, clearly revealing the internal metal CRT shielding and vacuum tube components through the shell. It includes the matching hockey puck circular mouse and the translucent keyboard. The active screen displays the Mac OS 8 desktop with classic Platinum-style icons.

Prompt: A cinematic low-angle shot of a Lockheed SR-71 Blackbird on a wet tarmac at dawn. The image must capture the corrugated, ribbed texture of the matte-black titanium skin. The focus is on the Pratt and Whitney J58 engine nacelles, featuring the sharp, conical movable intake spikes. Small puddles of red JP-7 fuel are visible leaking onto the ground beneath the fuselage, reflecting the Skunk Works logo on the tail fins.

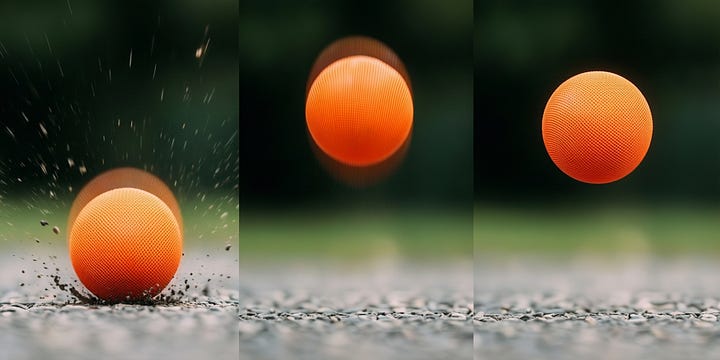

3. Physics and math are still challenging

Models appear to have some grasp of physics and of simple math such as counting, but that grasp is still shallow.

Prompt: A realistic photograph of exactly 7 sheep standing on a green field, each sheep fully and clearly visible and individually countable.

Prompt: A ball bouncing, shown at three points in its trajectory: the moment of impact with the ground (slightly compressed), mid-bounce upward, and at the peak of the bounce. Motion blur should be appropriate for each stage.

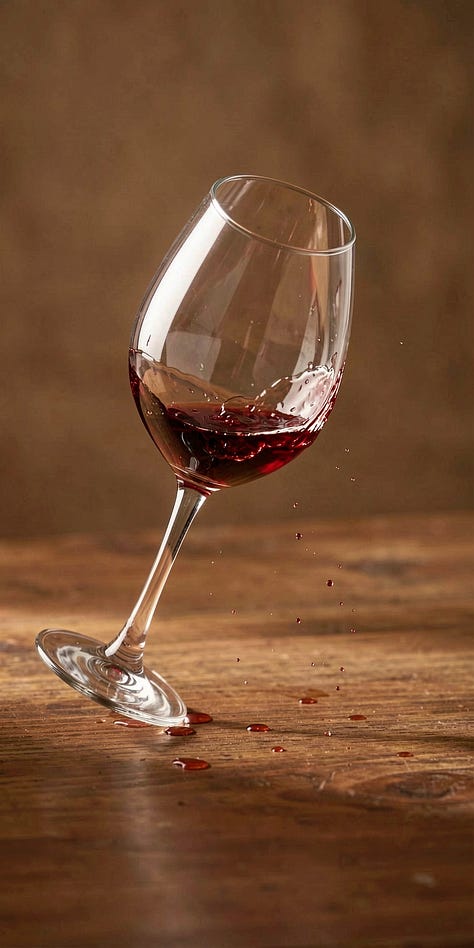

4. Common sense is hard

By “common sense,” we mean the things left unsaid but still necessary for the image to make sense.

Prompt: The exact moment of collision between two cars in an intersection. One red sedan T-boning a blue SUV. The image must show: both front airbags deploying, broken glass mid-shatter, the deformation of metal, and appropriate motion blur on debris.

Prompt: A wine glass that has just been knocked off a table, captured 0.5 seconds after leaving the edge. The glass should be tilted at a realistic angle for its falling trajectory, with wine beginning to spill out following gravity but not yet scattered.

5. LLMs are unreliable raters

It is tempting to use an LLM as a judge to automate model evaluation. In practice, that is unreliable.

An LLM often makes the same mistake the image model makes, which can artificially inflate some models’ scores more than others. That is one of the main reasons we want to release our Calibrated Test Set: it is rated by humans, which gives us a better sense of the actual error bounds.
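One way to make this kind of inflation visible is to compare pass rates per model between human raters and an LLM judge on the same images. The sketch below assumes simple pass/fail ratings and a hypothetical `judge_bias` helper. It is an illustration of the idea, not the scoring method we use.

```python
from collections import defaultdict


def judge_bias(ratings):
    """Compare human and LLM pass rates per model.

    `ratings` is an iterable of (model, human_pass, llm_pass) tuples with
    boolean verdicts for the same image. Returns
    {model: (human_rate, llm_rate, inflation)}, where inflation is
    llm_rate - human_rate. A positive value means the LLM judge is more
    lenient than the human raters for that model.
    """
    totals = defaultdict(lambda: [0, 0, 0])  # count, human passes, LLM passes
    for model, human_pass, llm_pass in ratings:
        entry = totals[model]
        entry[0] += 1
        entry[1] += int(human_pass)
        entry[2] += int(llm_pass)

    report = {}
    for model, (count, human, llm) in totals.items():
        human_rate = human / count
        llm_rate = llm / count
        report[model] = (human_rate, llm_rate, llm_rate - human_rate)
    return report


# Made-up ratings for two placeholder models, purely for illustration.
example = [
    ("model_a", True, True),
    ("model_a", False, True),
    ("model_b", True, True),
    ("model_b", False, False),
]
report = judge_bias(example)
```

If the inflation differs noticeably between models, a ranking based on the LLM judge alone would be skewed, which is the concern described above.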

Prompt: A photo of a classic red British telephone box K6 model on a London street corner. The box should feature the distinctive crown emblem at the top and the correct multi-pane glass windows.

Prompt: A realistic, high-shutter-speed photo of a ballet dancer performing a graceful high jete leap directly over a sleeping golden retriever. In the background, an artist at an easel is sketching the exact scene onto a canvas. The dancer’s shadow must fall realistically across the dog’s fur, and the sketch on the canvas must visibly mirror the dancer’s pose.

Prompt: A composite architectural visualization. The top section shows a photorealistic 3D cutaway view of the ground floor of a modern house, revealing a furnished living room (with sofa and TV) and a kitchen (with an island) separated by a partial wall. This 3D model hovers directly above a matching 2D architectural blueprint floor plan of the same level with perfectly aligned layout.

6. Style transfer and relighting are surprisingly good, even with small models

When the input image already does most of the heavy lifting, especially preserving the semantics of the scene, even small models can perform well on edits that are less semantic in nature. In some cases, these edits are even more physically plausible than what the same models produce when generating an image from scratch.

Prompt: Relight the entire scene to place them outdoors around a campfire at night. The only light source should be the warm, flickering orange glow of the fire coming from below their faces. This should create strong upward-casting shadows (under brows, noses, and chins) and illuminate the texture of their clothing from beneath, while keeping the background pitch black.

Prompt: Change the lighting to warm golden hour sunset, with orange tones and long shadows.

Prompt: Edit the statue’s feet to be wearing detailed, modern high-top basketball sneakers. The sneakers must appear to be carved entirely from the same block of aged marble, matching the stone texture, color, and weathering of the rest of the statue.

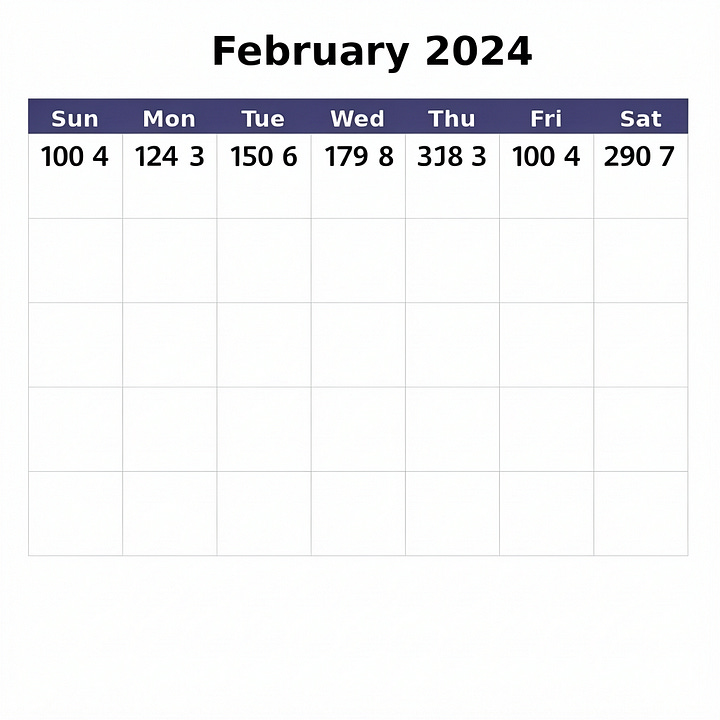

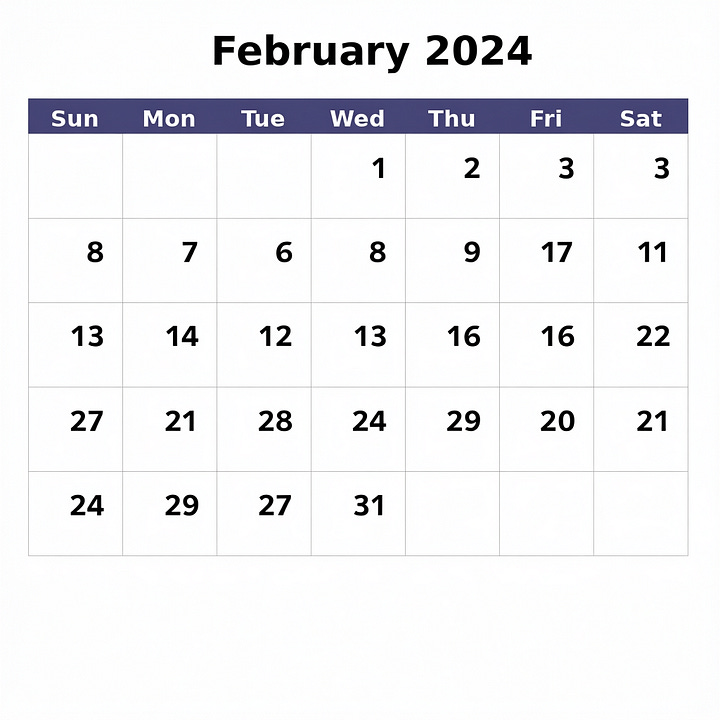

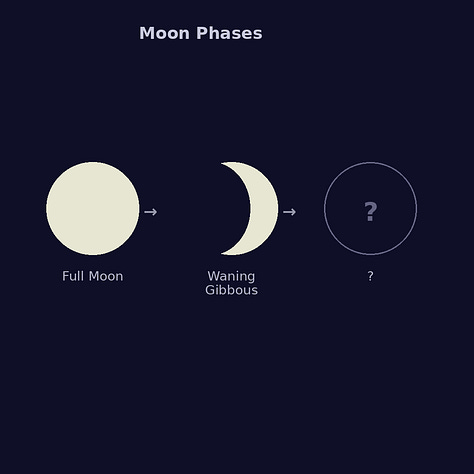

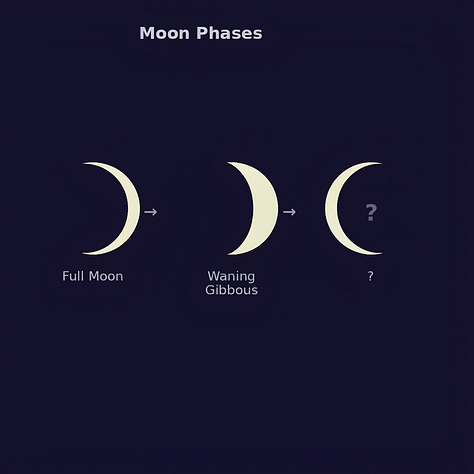

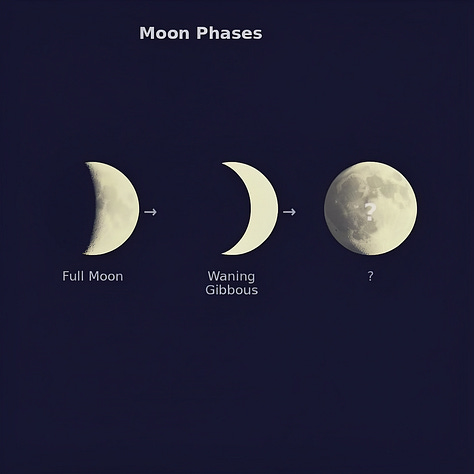

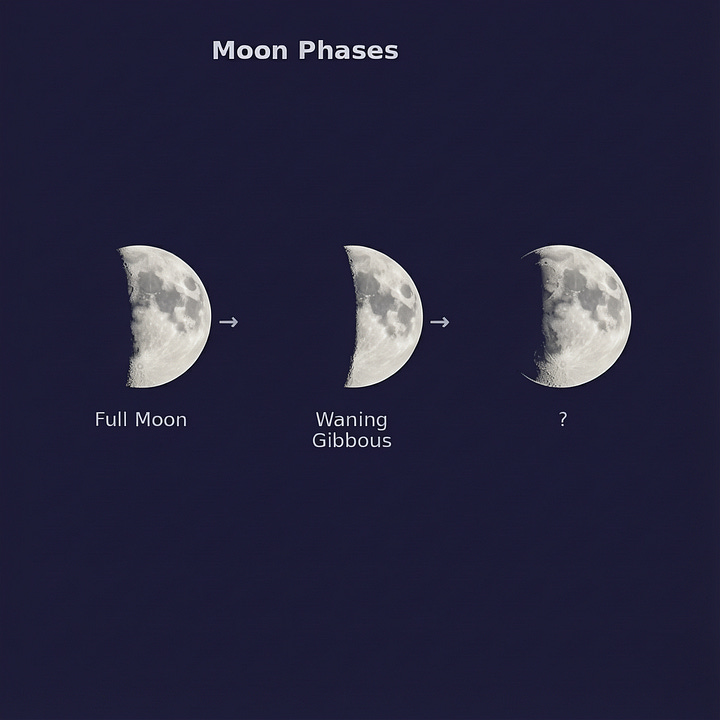

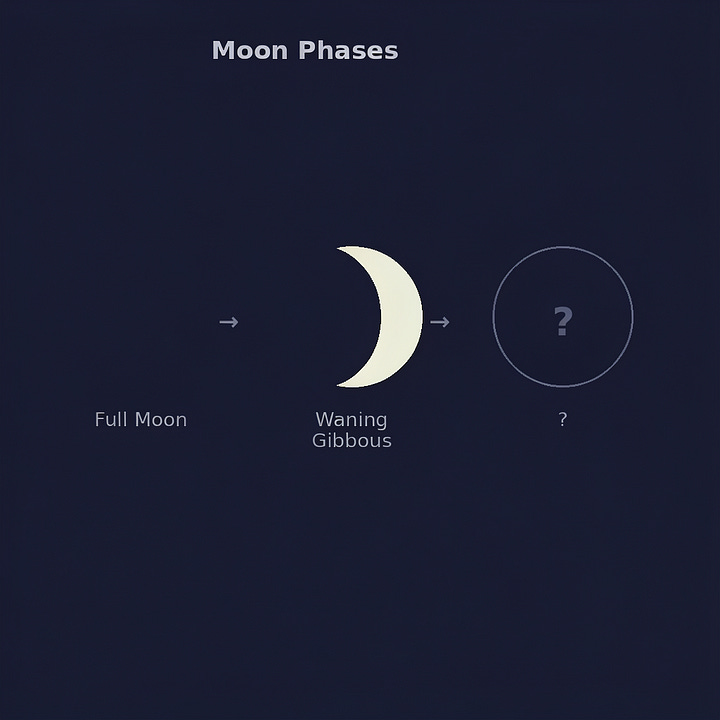

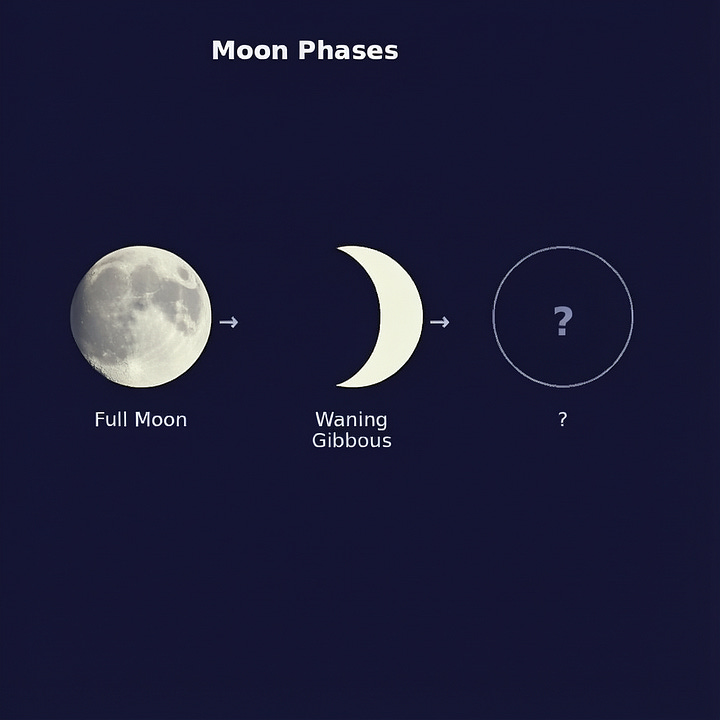

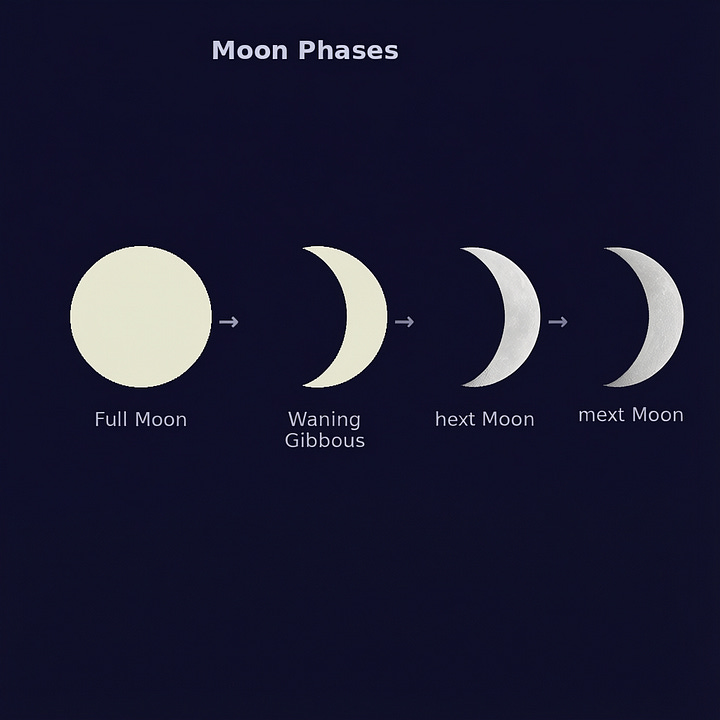

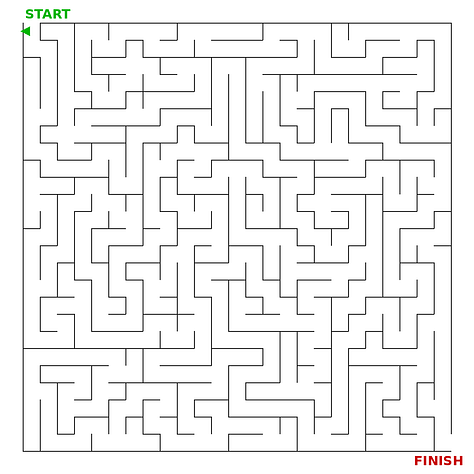

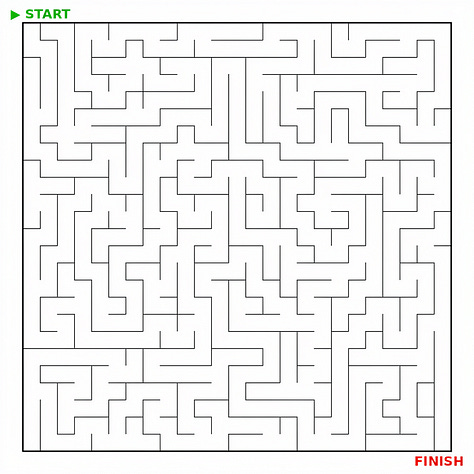

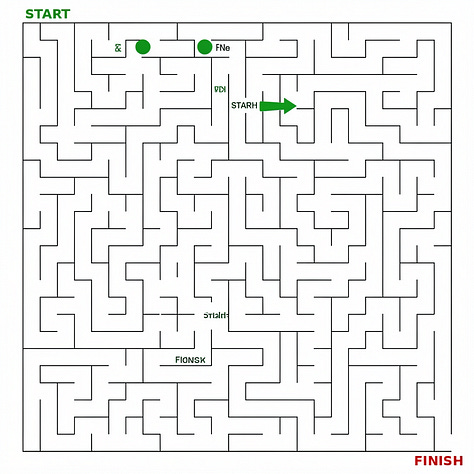

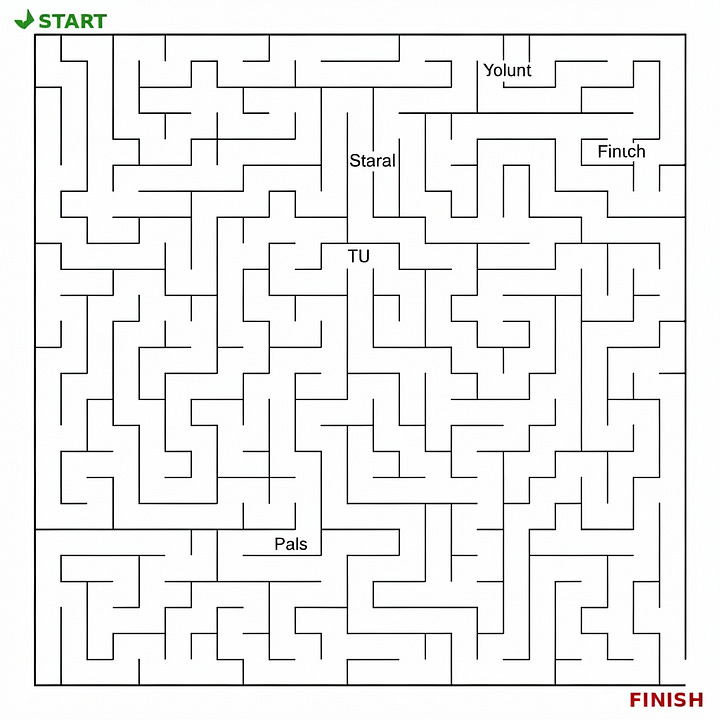

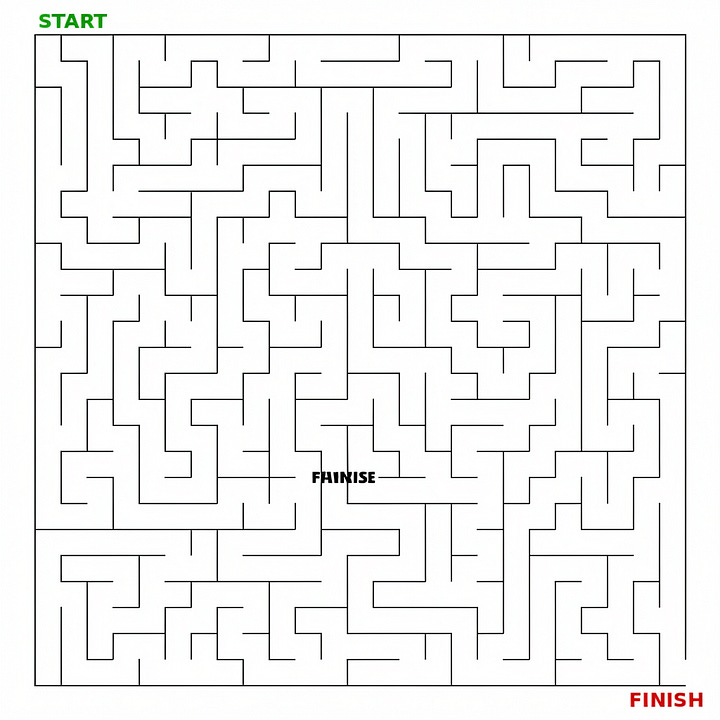

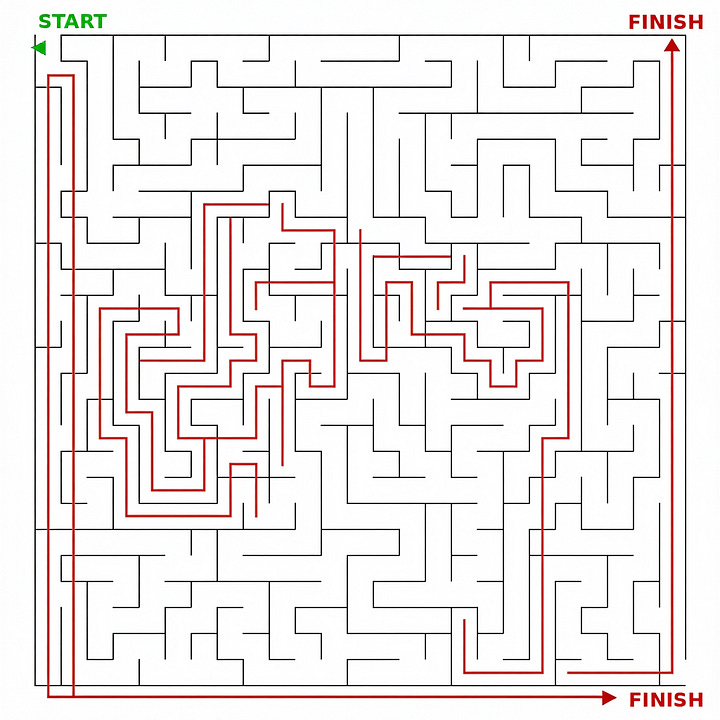

7. Editing models are not reasoners

The current generation of editing models is unable to perform even simple reasoning tasks. Some models, such as Qwen Image Edit 2511, do show more initiative, but they are still far from where they need to be.

Prompt: Fill in the dates correctly.

Prompt: Draw the next moon phase.

Prompt: Draw the solution path from Start to Finish.

We are still actively working through the Test Set, so this is only a status update on what we have seen so far. If this research direction is interesting to you, feel free to contact us at info@drawthings.ai.
